[ This article was previously written and published by Ira as part of the History of Computing at Cornell. Ira holds the copyright and has given his kind permission for its publication again on his class website. - Ed ]

These notes recount some of my experiences as a student at Cornell: as a part time employee of the Computer Center through student financial aid, as a student programming for fun, and as a part time employee in a theoretical physics project. At the time I had no idea how much influence this experience would have, but it changed my life in unexpected ways, so I have included at the end an account of how my career in biomedical computing came out of this exposure.

When I began my undergraduate studies at Cornell in 1961, I had just begun to learn about computing. It was a secondary interest, not closely related to my interest in mathematics and physics. I found out that the Computer Center provided free student access to its Burroughs 220 computer. I taught myself Burroughs 220 assembly language and began my first programming project, not for a class, but just out of interest in what could be done with computers, other than arithmetic. My friend from high school, Eric Marks, and I had discovered a small book by Lejaren Hiller and Leonard Isaacson, called "Experimental Music". Hiller and Isaacson’s work resulted in a composition, "Illiac Suite for String Quartet," named for the computer at the University of Illinois that they used. The goal of Eric’s and my project was to replicate some of their experiments in automatically composing music according to rules of harmony, voice leading, and also some more modern composition techniques. I soon realized that neither assembly language nor languages like Algol and FORTRAN were suitable for this task. I did not know at the time that a very powerful programming language called Lisp had just been invented to express exactly these kinds of symbolic, abstract ideas. I also did not know that this kind of programming was called "artificial intelligence." Nevertheless, it was my start. The project was an utter failure. I had endless trouble creating a suitable encoding system for the notes, chords, etc.

The following year, I was privileged to be offered a part time job as a night operator, through student financial aid. I had two wonderful teachers, Amos Ziegler and George Petznick. Amos taught me how to operate the Burroughs in a production style, as well as a modest amount of console troubleshooting. I learned how to put machine language instructions in through the console buttons, as well as how to write little programs that would flash messages on the 4x10 light arrays of the console registers. Amos taught me how to operate the big tape drives (1 inch wide tape), and I had my own tape for my personal programming experiments.

Ira Kalet at the Burroughs 220 console in 1962

One of the benefits of the job was that we student operators also got instruction in programming, algorithms and data structures from George Petznick. We met once a week with George. He was a model of clarity, and gave us his best. I am sorry to say, I didn’t put the same commitment in. One time, he assigned us to write a sorting algorithm (in FORTRAN) that would sort 100 random integers. I hadn’t set aside time to work on it, so at the last minute just before the little class met, I punched up an 8 line program that implemented the simplest thing I could think of, which was, as it turned out, a variant of the bubble sort algorithm. When I presented it in class, George laughed and said it would be very slow. We ran it. It took 8 minutes to do the 100 numbers. George’s sort program (the algorithm for which I don’t recall, perhaps mergesort or quicksort) took under 6 seconds. However, I refused to be embarrassed. My program was horribly inefficient, but it was 100% correct, and it took only a few minutes to write. This set a precedent, which I still follow, and teach, today, which is to get it right first, and make it efficient afterward.
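For readers who want to see the idea concretely, here is a minimal sketch of a bubble sort in Python. It is not the original FORTRAN program, which is not reproduced in these notes; the function name `bubble_sort`, the early-exit check, and the seeded random test data are illustrative assumptions.

```python
import random


def bubble_sort(values):
    """Return a sorted copy of `values` using a simple bubble sort."""
    items = list(values)  # work on a copy so the caller's list is untouched
    # After each pass the largest remaining value has "bubbled" to position `end`.
    for end in range(len(items) - 1, 0, -1):
        swapped = False
        for i in range(end):
            if items[i] > items[i + 1]:
                # Swap adjacent values that are out of order.
                items[i], items[i + 1] = items[i + 1], items[i]
                swapped = True
        if not swapped:
            break  # no swaps in a full pass means the list is already sorted
    return items


# Hypothetical stand-in for the assignment: 100 random integers.
random.seed(0)
numbers = [random.randint(0, 999) for _ in range(100)]
result = bubble_sort(numbers)
```

The nested loops are what make this approach slow: the number of comparisons grows roughly with the square of the list length, whereas mergesort and quicksort typically need far fewer. The appeal is the one described above: it is short and easy to get right.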

That year I took Electricity and Magnetism, as well as Applied Mathematics courses, and there were assignments requiring numerical computation. They were not intractable by hand, but one seemed tedious and I thought it could be easily programmed. The problem was to solve an electrostatics boundary value problem by "relaxation". This used the following property of functions that are solutions of Laplace’s equation: the value of the function at a point is equal to the average of the values on a circle or sphere centered on the point. One can start with a crude approximation that matches the boundary values, and then iterate the internal values until the results don’t change much in any iteration. I wrote a small program to solve the problem, several days before the homework was due. This one (unlike my first) had lots of errors. I was showing up at the Computer Center, handing a small deck of cards to Amos, which he would very kindly slip into the batch stream, run my job, and bring me back the printout from the IBM 407 line printer (kachunk, kachunk), and I would run off to a quiet place to figure out what was still wrong. After many iterations, I thought I had everything right, but it was ten minutes to class time. I brought my deck to Amos, and he put it in. The line printer printed out intermediate results as the program ran, and as I watched the obviously endless loop, I realized I had forgotten to divide by the number of boundary points when computing the average! I wanted to fix it quickly and make one more run, so I could turn in this very "cool" solution to the homework. But Amos had had enough. He said he was just too busy, and chased me out, though kindly. I turned in the incomplete assignment, but I got an A in the course anyway. From that I learned the value of interactive programming.
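The relaxation idea can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a reconstruction of the original homework program: it assumes a two-dimensional square grid, uses the four nearest neighbors of each interior point as the surrounding values, and updates values in place. The function name `relax`, the tolerance, and the example plate are all hypothetical.

```python
def relax(grid, tolerance=1e-6, max_sweeps=10_000):
    """Relax the interior of a 2D grid in place; edge values stay fixed.

    Returns the number of sweeps performed.
    """
    rows, cols = len(grid), len(grid[0])
    for sweep in range(1, max_sweeps + 1):
        largest_change = 0.0
        for r in range(1, rows - 1):
            for c in range(1, cols - 1):
                neighbor_sum = (grid[r - 1][c] + grid[r + 1][c]
                                + grid[r][c - 1] + grid[r][c + 1])
                # Divide by the number of neighbors to get the average.
                # Leaving out this division is the kind of slip that keeps
                # the iteration from ever settling down.
                new_value = neighbor_sum / 4.0
                largest_change = max(largest_change,
                                     abs(new_value - grid[r][c]))
                grid[r][c] = new_value
        if largest_change < tolerance:
            return sweep  # values stopped changing much: converged
    return max_sweeps


# Hypothetical example: a 5x5 plate with the top edge held at 100
# and the other three edges held at 0; interior starts at 0.
size = 5
plate = [[0.0] * size for _ in range(size)]
plate[0] = [100.0] * size
sweeps = relax(plate)
```

The stopping rule mirrors the description above: keep sweeping until no interior value changes by more than a small tolerance in a full pass. The `max_sweeps` cap is a safeguard so that a mistake in the update cannot loop forever.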

My next adventure happened during my senior year, when I obtained a part time job as a programmer for a researcher in the Cornell Physics Department. I am sorry to say I don't remember his name. He was a theorist, doing some numerical calculations applying what was then known as elementary particle phenomenology. From general principles of Quantum Field Theory and some specifics about the known elementary particles, some things could be approximately computed, even though the full theory could not be solved. He wrote a program to compute the pi meson form factor, and asked me to make a few changes and additions. The program, in FORTRAN, ran on the Control Data 1604. This being a research project, his grant paid for the computer time. However, since I had lots of operator experience, I was able to schedule to come in during the evening and run the CDC 1604 myself. After getting the program into good shape, he asked me to run it for a series of energy transfer values; I think they were something like 1, 5, 10, 25 and 50 GeV. However, I got the indexing wrong and the run, which took several hours of chargeable night running time, produced values for 1, 2, 3, 4, 5 GeV instead. When I discovered my mistake I was so embarrassed I wrote an apology and resignation note, and delivered that and the output to the researcher. He was amused, not angry. His comment was, well, now we have lots of detailed results for the lower energy range. However, he accepted my resignation anyway, which was fine because at that point I needed to get caught up on homework and study for final exams.

The CDC 1604 was a binary oriented machine with 48 bit words, and octal display registers, as opposed to the Burroughs binary coded decimal organization. One thing that was fun about the CDC was that an amplifier and speaker were attached to one of the register bits, so that you could "hear" the machine (when the bit modification rate was in the audio range...). Of course this led to people writing programs to play music on the console speaker. Remember, this was 1964, well before MIDI and all the wonderful later programs for generating electronic music. However, I was struck by how straightforward this task seemed, compared to the problem of automated music composition, at which I had failed earlier.

I went on to graduate study in theoretical physics at Princeton, and my involvement with computing was dormant until 1978, ten years after completing my Ph.D. I had decided to pursue training in medical physics at the University of Washington Radiation Oncology Department. Within a year or so, I found a niche in the department, because of my prior experience with computing. I wrote some assembly language scanning device control programs. Then I participated in writing an interactive simulation system for designing radiation treatments for cancer (so-called RTP programs). When I became a faculty member, I convinced the department chair, Tom Griffin, to buy a VAX-11/780 computer and a frame buffer graphic display for the department. I was strongly supported by George Laramore, who also had left a career in theoretical physics, though George actually went to medical school and became a radiation oncologist. We got the VAX, and I became the chief cook and bottle washer, programmer, system administrator, etc. I hired Jonathan Jacky to work with me. Jon had just finished a postdoc in physiology, and like me had a nascent interest in computing.

Jon and I had to quickly get some programs running, and they had to be correct, since the calculations would be used for planning treatment for real patients in the clinic. We wrote programs for managing data that were table driven so that the program itself could be kept simple. The handling of data by these programs was horribly inefficient (sound familiar?) but we got the whole set running in 7 months, complete with interactive display of X-ray cross sectional images. The design of the data entry program and the overall design of the whole suite were innovative and warranted publication (in Communications of the ACM, 1983, and Computer Programs in Biomedicine, 1982). So, the class with George Petznick was the first link to my newly emerging career in computing.

Meanwhile, I became acquainted with Steve Tanimoto and Alan Borning at the UW Computer Science Department. From Alan I learned about artificial intelligence, and together with graduate student Witold Paluszynski, I began to work on innovative applications of artificial intelligence to the radiation treatment planning problem. Of course in the meantime I learned Lisp, and then I got some perspective on the experimental music project of my freshman year at Cornell. The application of AI to RTP turned out to be far more tractable. It (and other applications of AI in medicine and biology) has become the focus of my more recent career, in Biomedical and Health Informatics.

Since then we have gone through many generations of computers, and Jon has moved on to Mechanical Engineering where he is working on software for a magnetic resonance force microscope. Meanwhile, George Laramore took over the Radiation Oncology Department as chair following Tom Griffin, and has continued to strongly support our little group of pioneers in computing in radiation oncology. I am now full Professor of Radiation Oncology, and Professor of Medical Education and Biomedical Informatics, one of the founders of the University of Washington Biomedical and Health Informatics graduate program, as well as an adjunct faculty member in the UW Department of Computer Science and Engineering since 1983. I've just finished a book, called "Principles of Biomedical Informatics", to be published by Academic Press (Elsevier) in October 2008. My computing experiences as an undergraduate at Cornell played a significant role in what has turned out to be a fun and wonderful career. I am grateful to Amos Ziegler and George Petznick for the opportunity they provided to me way back then.

Ira Kalet

University of Washington

http://www.radonc.washington.edu/faculty/ira/

June, 2008

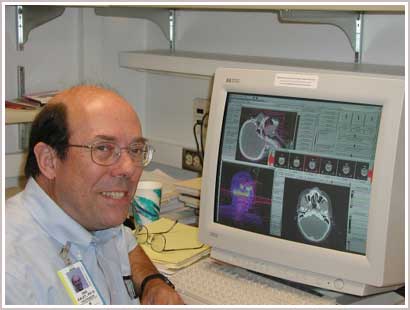

Ira Kalet in 2004